The Alignment Tax Is Not a Law of Nature

There’s a moment in every model family’s life when something flips. Before that moment, getting smarter makes the model less honest. After it, getting smarter makes it more honest. We found that moment, measured it, and showed you can move it.

The Trade-off Everyone Assumes

Ask anyone in AI safety: “Does scaling make alignment harder?” Most will say yes. It’s treated as a law of nature — bigger models, bigger problems.

We measured it. Across 63 base models in 16 families, we tracked how reasoning (HellaSwag) and truthfulness (TruthfulQA) relate as models get bigger. Not whether each improves — whether they help or hurt each other.

The Phase Transition

Think of water freezing. Above 0°C, molecules move freely. Below, they lock into crystal. The physics doesn’t gradually change — it flips at a sharp boundary.

AI capabilities do the same thing. Below a critical scale, reasoning and truthfulness are anti-correlated (r = −0.989 in Pythia). Train the model to reason better, and it gets less truthful. This is the alignment tax. It’s real. Every web-trained family shows it.

But above that critical scale, the sign flips. Capabilities cooperate. Better reasoning = better truthfulness. No trade-off. The tax was a phase, not a law.

Tax Phase (below Nc): capabilities fight, γ₁₂ < 0

Transition (at Nc): maximum leverage, γ₁₂ = 0

Bonus Phase (above Nc): capabilities cooperate, γ₁₂ > 0
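To make the three phases concrete, here is a minimal sketch of how one might label a model family from its benchmark scores. It uses the Pearson correlation between reasoning and truthfulness scores across model sizes as a stand-in for the coupling γ₁₂. That stand-in, the tolerance `tol`, and the scores are all illustrative assumptions; the papers' own estimator may differ.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation between two equal-length score lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length lists with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary for a correlation to exist")
    return cov / math.sqrt(var_x * var_y)

def phase(coupling, tol=0.05):
    """Map a coupling value to a phase label. `tol` is an assumed dead band."""
    if coupling < -tol:
        return "tax"
    if coupling > tol:
        return "bonus"
    return "transition"

# Hypothetical scores for one family, ordered by increasing model size.
hellaswag = [30.0, 35.0, 42.0, 50.0]
truthfulqa = [45.0, 43.0, 40.0, 38.0]

r = pearson_r(hellaswag, truthfulqa)
print(f"r = {r:.3f}, phase = {phase(r)}")
```

With these made-up numbers reasoning rises while truthfulness falls, so the family lands in the tax phase.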

It’s Not One Number

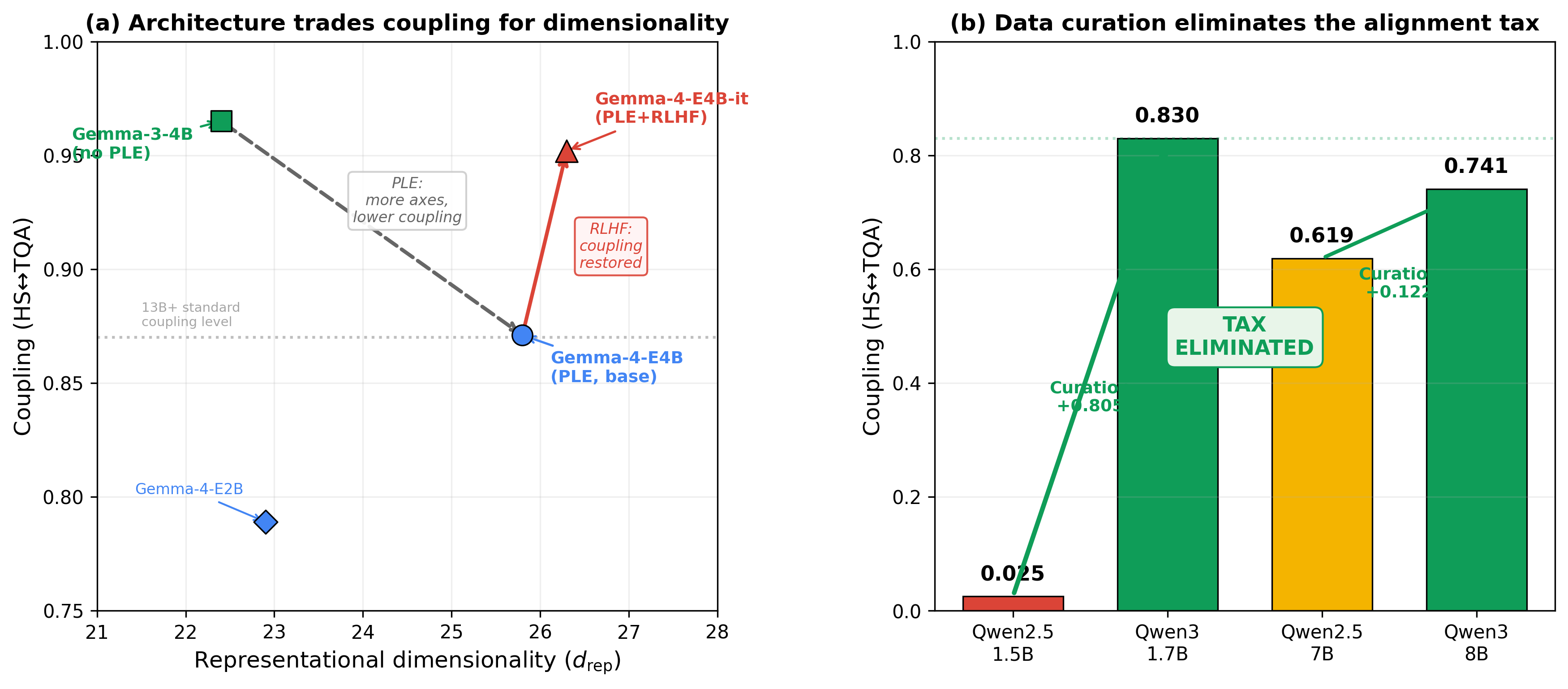

The critical scale Nc isn’t a universal constant. It’s a design parameter. OPT hits it at 0.12B. Pythia at 3.5B. Falcon at 7B. That’s a roughly 60× range.

Even more interesting: curated models like Phi and Qwen3 bypass the tax entirely. Their Nc is effectively below the smallest model tested. Data curation doesn’t just improve quality — it moves the phase boundary. Phi at 1B achieves coupling characteristic of standard-trained 10B models.

Three levers shift Nc independently: data curation, model width, and architecture. Each is measurable. Each is actionable.
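One way to picture where Nc sits for a family: take a coupling estimate at each model size and find where it crosses zero. The sketch below interpolates linearly in log10 of parameter count. The interpolation scheme, the function name `estimate_nc`, and the example values are assumptions for illustration, not the procedure used in the papers.

```python
import math

def estimate_nc(sizes_b, couplings):
    """Estimate the size (billions of parameters) where coupling crosses
    from negative to non-negative, interpolating in log10(size).
    Returns None when no such crossing exists in the data."""
    points = sorted(zip(sizes_b, couplings))
    for (n0, g0), (n1, g1) in zip(points, points[1:]):
        if g0 < 0 <= g1:
            # Fraction of the way from n0 to n1 at which coupling hits zero.
            t = -g0 / (g1 - g0)
            log_nc = math.log10(n0) + t * (math.log10(n1) - math.log10(n0))
            return 10 ** log_nc
    return None

# Hypothetical coupling estimates for a web-trained family.
sizes = [0.07, 0.41, 1.0, 2.8, 6.9]
gammas = [-0.8, -0.5, -0.2, 0.2, 0.6]
print(estimate_nc(sizes, gammas))

# A family that is cooperative at every tested size has no crossing.
print(estimate_nc([0.5, 1.7, 4.0], [0.3, 0.5, 0.6]))

# Range check on the quoted values: Falcon at 7B versus OPT at 0.12B.
print(7.0 / 0.12)
```

A `None` result corresponds to the curated case described above, where Nc is effectively below the smallest model tested.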

What This Looks Like at Frontier Scale

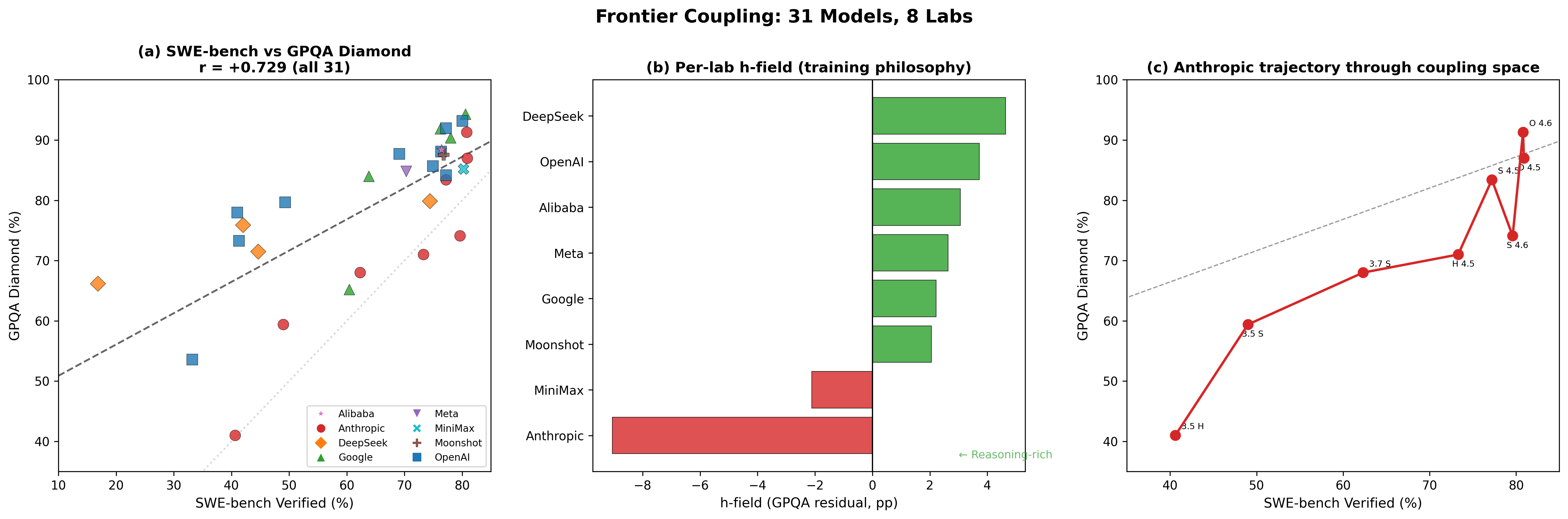

This is where Paper 3B (“The Growing Pains of Frontier Models”) picks up. At frontier scale, with 39 models from 10 labs, we can no longer measure HellaSwag against TruthfulQA because both are saturated. SWE-bench and GPQA Diamond are the new axes, and they cooperate too: r = +0.72.

The h-field is the key diagnostic. It’s simply how far each model deviates from the cooperation trend. Positive h = reasoning-rich. Negative h = coding-rich. One number tells you a model’s training philosophy.

In physics, the external magnetic field h breaks symmetry between spin-up and spin-down. In CAPE, the h-field is the external force — the training recipe — that pushes a model off the natural cooperation trend. A coding-heavy recipe pushes h negative (like a field favoring spin-down). A reasoning-heavy recipe pushes h positive. The field is external to the model’s intrinsic coupling — it’s what the lab chose, not what the architecture wants.
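Since the h-field is described as the deviation from the cooperation trend, a rough version can be sketched as a regression residual: fit a straight line of GPQA Diamond against SWE-bench across models, then subtract the line's prediction from each model's actual GPQA score. The least-squares fit and the choice to measure the residual along the GPQA axis (so positive means reasoning-rich) are assumptions, and the scores are invented.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def h_field(swe, gpqa, slope, intercept):
    """Residual on the GPQA axis: positive = more reasoning than the trend
    predicts, negative = more coding than the trend predicts."""
    return gpqa - (slope * swe + intercept)

# Hypothetical (SWE-bench, GPQA Diamond) scores in percentage points.
models = {
    "model-a": (50.0, 70.0),
    "model-b": (60.0, 72.0),
    "model-c": (70.0, 84.0),
    "model-d": (80.0, 82.0),
}
swe_scores = [s for s, _ in models.values()]
gpqa_scores = [g for _, g in models.values()]
slope, intercept = fit_line(swe_scores, gpqa_scores)

for name, (swe, gpqa) in models.items():
    print(name, round(h_field(swe, gpqa, slope, intercept), 1))
```

Because h is a residual, its values only make sense relative to the set of models used to fit the trend, so it is worth recomputing when the comparison pool changes.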

Google consistently invests in reasoning (h stays positive across releases). Anthropic is coding-rich (h = −6.9 on average) — but this isn’t permanent. When Sonnet 4.6 went deep into a coding excursion (h = −13.1), Opus 4.6 recovered to h = +3.5 at the next release. Tax excursions are temporary. The same pattern shows up at OpenAI (GPT-5.4 dips, GPT-5.2 Pro recovers) and Google (Flash→Pro excursion then recovery).

Coding-specialist releases create local tax excursions that recover at the next generation. The recurrence of this pattern across Anthropic, OpenAI, and Google, each with different architectures, data, and training recipes, is the strongest evidence we have that the coupling dynamics are fundamental, not lab-specific.

The Cascade: It Keeps Repeating

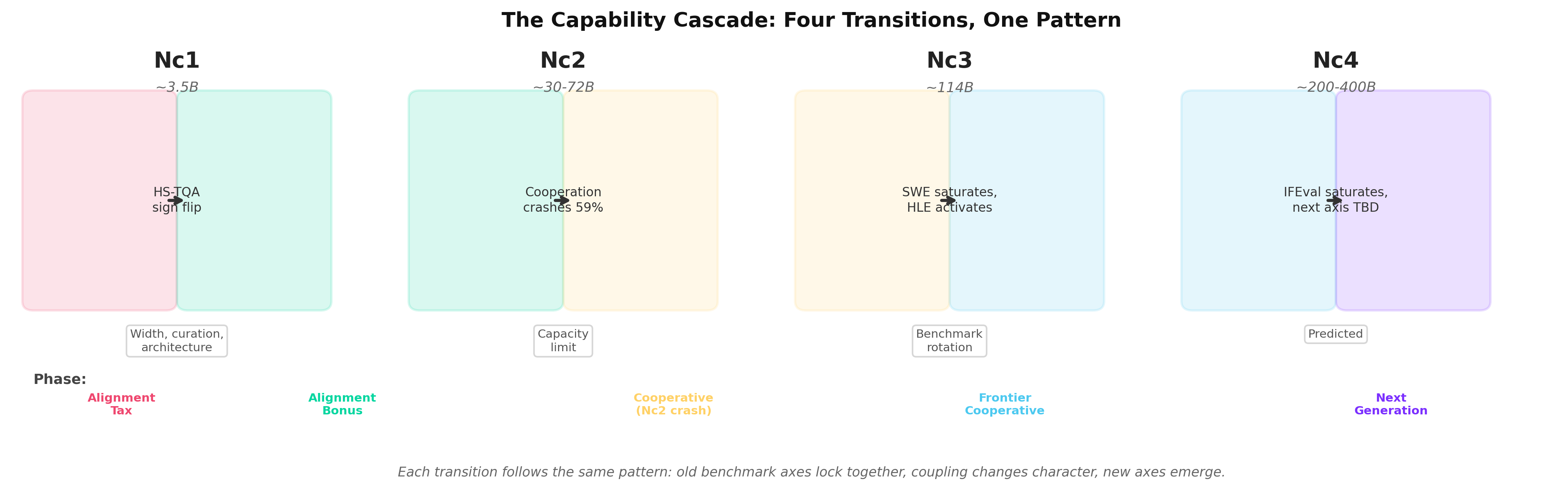

Here’s what surprised us most. The transition doesn’t happen once. It repeats at every scale, with different benchmarks each time:

Nc1 (~0.1–7B): HS ↔ TQA coupling flips

Nc2 (~30–72B): internal coupling crashes 59%; SWE ↔ GPQA activate

Nc3 (~114B, predicted): SWE saturates; IFEval ↔ HLE activate

Nc4 (~200B+, predicted): IFEval saturates; next axis TBD

At each level, the old benchmarks lock together (they stop discriminating), new ones emerge, and the whole tax-transition-bonus cycle starts fresh. Think of a child learning to walk — at first, balance and speed fight each other (the tax). Then they click, and speed helps balance (the bonus). Then the child starts running, and a new trade-off appears between speed and agility. Each level of mastery creates a new coupling that has to be resolved at the next level.

We measured this directly in OPT’s internal coupling: it rises from 0.514 (125M) to 0.876 (13B), then crashes to 0.356 at 30B — the same pattern as Nc1, repeating at Nc2. Same math. Different scale. Like harmonics of a vibrating string.
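As a quick arithmetic check on those OPT numbers, the relative change between checkpoints can be computed directly. The helper name `relative_change` is only illustrative; the three coupling values are the ones quoted above.

```python
def relative_change(before, after):
    """Fractional change from `before` to `after`."""
    return (after - before) / before

rise = relative_change(0.514, 0.876)   # 125M -> 13B
crash = relative_change(0.876, 0.356)  # 13B -> 30B

print(f"125M -> 13B: {rise:+.1%}")
print(f"13B -> 30B: {crash:+.1%}")
```

The second figure works out to a drop of about 59%, which matches the size of the internal-coupling crash listed for Nc2.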

The Equation That Predicts Benchmarks

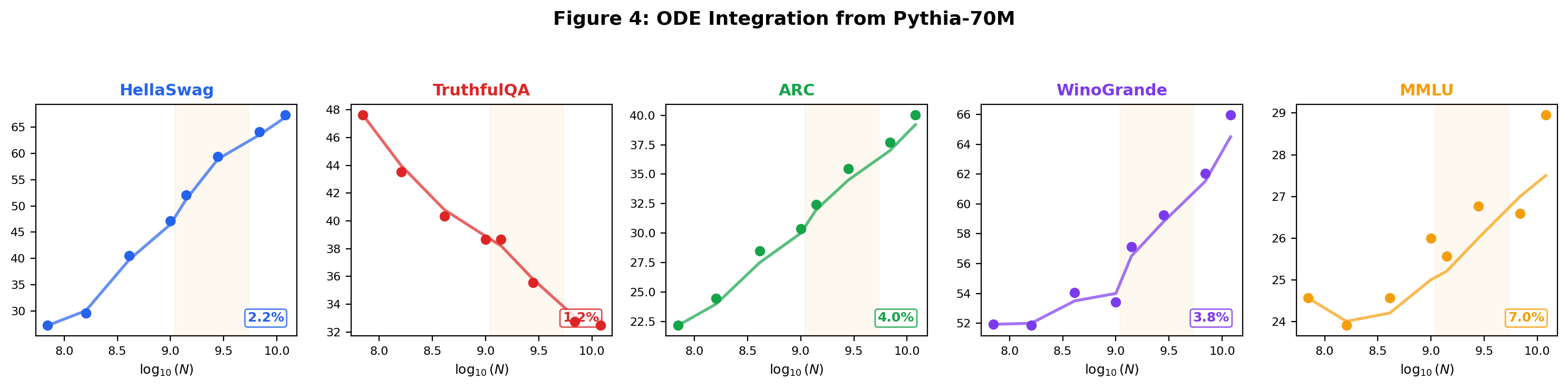

The coupling isn’t just a pattern we noticed. It’s governed by an ODE, a differential equation we discovered from data. Feed it one initial condition (Pythia-70M’s scores) and it predicts all 5 benchmarks across 8 model sizes. It then cross-predicts a held-out family (Llama-2) at 5.6% MAE. No physics was assumed; the equation emerged from the data. But it has the same form as equations that govern phase transitions in superconductors.

Engineering: You Can Skip the Tax

The most practical finding: the alignment tax can be eliminated. Phi at 1B achieves coupling characteristic of standard-trained 10B models. Qwen3 at 1.7B has 100% cooperative heads where Qwen2.5 at 1.5B had 97% competing. One generation of curation erased the tax entirely.

What To Do With This

This isn’t just measurement. It’s actionable:

If you’re training below Nc: Don’t just scale. Curate. One unit of data quality ≈ 10× model scale in coupling improvement.

If you’re at Nc: You’re at the critical point. Small interventions have maximum leverage. This is where alignment ROI is highest.

If you’re deploying a frontier model: Compute the h-field from two public benchmark scores. It takes 30 seconds and tells you your model’s training bias. If |h| > 5, your model is a specialist — plan accordingly.

If you’re evaluating models: Watch the saturation ratio. When the top-5 models compress to <2pp spread on a benchmark, that benchmark is done. The next axis is already activating.
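The last two rules of thumb are simple enough to write down as checks. This is a sketch under the thresholds stated above (|h| > 5 for a specialist, top-5 spread under 2 percentage points for a saturated benchmark); the function names and leaderboard scores are hypothetical.

```python
def is_specialist(h, threshold=5.0):
    """True when the h-field magnitude exceeds the specialist threshold."""
    return abs(h) > threshold

def top_k_spread(scores, k=5):
    """Gap in percentage points between the best and k-th best score."""
    if len(scores) < k:
        raise ValueError(f"need at least {k} scores")
    top = sorted(scores, reverse=True)[:k]
    return top[0] - top[-1]

def is_saturated(scores, k=5, threshold_pp=2.0):
    """True when the top-k models are compressed within threshold_pp."""
    return top_k_spread(scores, k) < threshold_pp

# h values quoted earlier in this post.
for h in (-6.9, -13.1, 3.5):
    print(h, is_specialist(h))

# Hypothetical leaderboards, scores in percentage points.
crowded = [95.1, 94.8, 94.2, 93.9, 93.5, 88.0, 71.2]
open_field = [80.0, 74.0, 70.0, 66.0, 61.0, 50.0]
print(is_saturated(crowded), is_saturated(open_field))
```

Using the top-k spread rather than the full range keeps one weak model at the bottom of the table from hiding saturation at the top.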

Seven Bets on the Table

We made 7 falsifiable predictions with timestamped deadlines. If we’re wrong, the framework breaks publicly. Three are already confirmed:

1. OLMo at γ₁₂ = 0.000 exactly (confirmed independently by AI2)

2. ODE cross-predicts Llama-2 at 5.6% MAE (2.6× better than polynomial)

3. Qwen3 cooperative at all scales (curation eliminated the tax)

The four remaining predictions test frontier dynamics: SWE saturation by Dec 2026, IFEval activation, lab trajectory persistence, and the Nc4 cascade. The dashboard tracks these live.

Try It

The CAPE Dashboard lets you enter any model’s benchmarks and get its phase, coupling trajectory, h-field, and ODE prediction. The cape-steer CLI lets you run activation-level alignment correction on any open-weight model.

The alignment tax is not a law of nature. It is an engineerable bottleneck — a phase that every model family grows out of, and that good engineering can skip entirely.

Papers: “Lying Is Just a Phase” (Paper 3A) and “The Growing Pains of Frontier Models” (Paper 3B) — NeurIPS 2026. Code and data at github.com/adilamin89/cape-scaling.

Contact: [email protected]